Writing is really, really hard. Learning to write even more so. Ronald Kellogg – cognitive psychologist and author of The Psychology of Writing – describes writing as the cognitive equivalent of digging ditches. Learning to write is the hardest thing we ask children to do in schools, yet despite the resolute focus on curriculum in England, few schools have a well-developed writing curriculum. Schools might have a plan for when specific books will be taught and use these as a stimulus for devising writing tasks. However, in terms of being really clear about what component writing knowledge is taught when, and why, and how this progressively builds in complexity over time, I venture to suggest that in many schools, this is not yet as developed as it could be. There is a degree of the horse following the cart in having a writing curriculum developed to fit in with text choice, rather than choosing texts for writing that exemplify increasing complexity over time in sentence construction, authorial voice and so on.

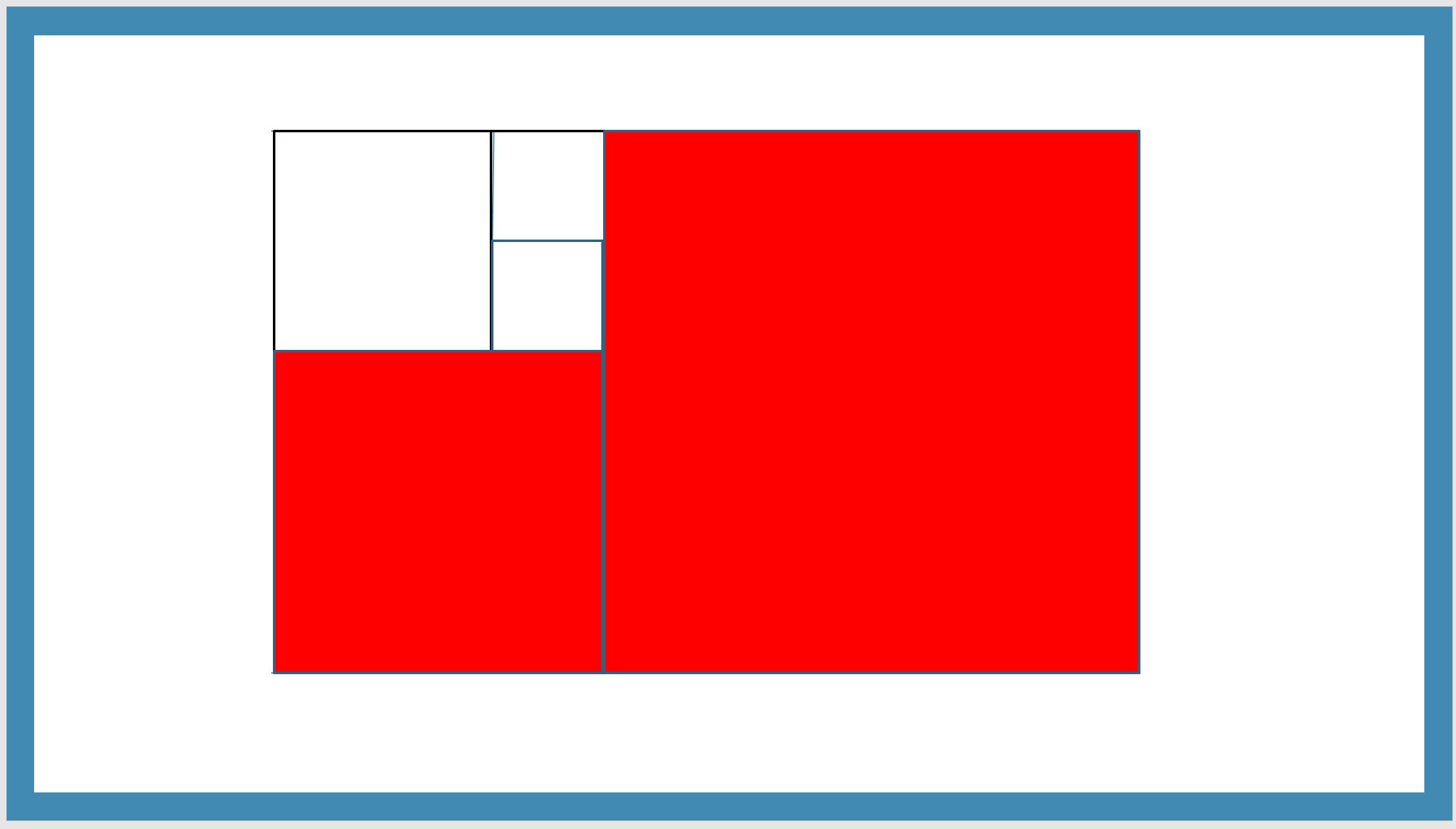

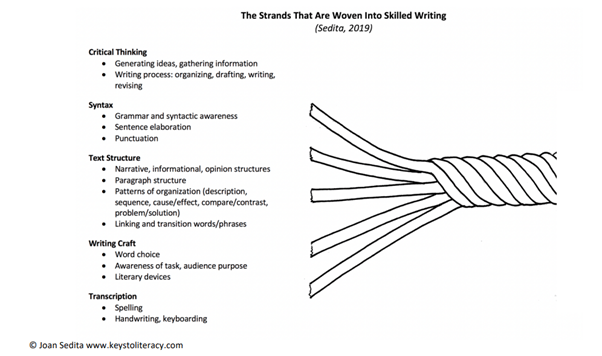

A central problem is a lack of clarity about what the components of the writing process actually are. On top of that, there is the perhaps even more thorny issue of how to balance practising these components in relative isolation, so that children can develop fluency and accuracy in their application, with integrating these skills in creative writing for a real audience. Education fashion waxes and wanes in emphasising one or other aspect, when, to state the tiresomely obvious, children need both and need them taught in a way where the technical enables the creative. Teaching the technical without it feeding into the creative is pointless. Teaching the creative without building technical competence is futile.

Because writing is such hard work, motivating children to do the work necessary to learn how to do it well is a challenge. The profession tends to come up with one of two solutions to the motivation problem: either make sure children have all the tools they need to be successful before expecting much by way of cognitive ditch digging, or try to make ditch digging seem irresistibly glamorous – ‘imagine all those crops you will irrigate!’ – so that the effort seems purposeful while skating over learning any tedious mechanics of how to wield your spade effectively.

If we focus too much on the allure of the final product without teaching children how to develop technical proficiency, then we risk demotivating children because the whole process becomes impossibly hard. If we focus on developing one process at a time, we remove the motivational effects of producing an authentic piece of writing that someone else might find interesting. There is a sweet spot somewhere that harnesses the benefits of both. There is also whatever the opposite of a sweet spot is – a sour spot? – where neither source of motivation is leveraged.

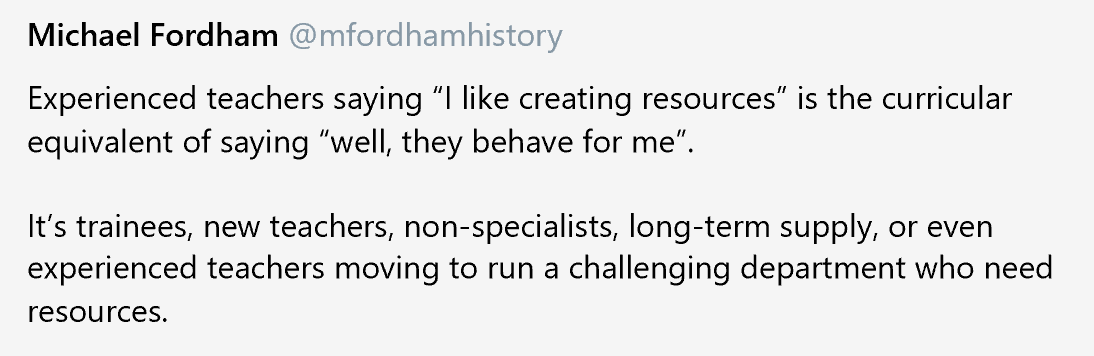

There are probably several reasons why schools might occupy this sour spot. To give curriculum time to both the development of fluency in the various components of writing and to the production of high-quality authentic pieces of writing consumes a hefty amount of a finite and already stretched timetable. The accountability measures in England, be they SATs at the end of year 6 or GCSEs at the end of year 11, do not obviously incentivise schools to conceptualise writing as a journey from the technical to the creative. For example, primary schools might teach grammar in order for children to pass the SPAG test, without drawing sufficient attention to why an author might choose to use a fronted adverbial or embedded clause. A primary school might also make the calculation that spending a lot of time teaching spelling is not a good investment since the marks awarded for spelling are relatively few. Punitive accountability systems distort understanding of what the building blocks of developing as a writer actually are, with schools mistaking the requirements of high stakes assessments for a curriculum. So, for example, the Ofsted subject report for English described how ‘external assessments at both primary and secondary level unhelpfully shape the curriculum.’[1]

- Schools expect pupils to repeatedly attempt complex tasks that replicate national curriculum tests and exams. This is at the expense of first making sure that pupils are taught, and securely know, the underlying knowledge they need.

- Some pupils are given considerable help to access these complex tasks, wasting precious time and resources on activities that do not result in them making progress.

- [Secondary] schools do not always identify the grammatical and syntactical knowledge to be taught for writing, and so do not build on what has been taught at primary school. Instead, written tasks are often modelled on GCSE-style assessments.[2]

What is this underlying knowledge that children need to develop into competent writers? How do we teach this knowledge in a way that feeds into and enables the creative? When do we need to work on component knowledge in isolation and when do we integrate this knowledge within complex, creative tasks? To understand this, I think it is useful to unpick what we mean by knowledge in terms of the English curriculum and what the journey from knowledge to skills (aka procedural knowledge) looks like.

It’s not so long ago that teachers used to say things like ‘English is a skills subject. You can’t really talk about knowledge in English.’ These days teachers might accept this is not quite right but still struggle to unpick what the knowledge is within English and how it relates to writing creatively.

Let’s start by reminding ourselves about the different kinds of knowledge. The Ofsted subject report talks of foundational knowledge. However, I think it is useful to break this down further. First of all, we have substantive knowledge. Substantive knowledge can be categorised as either conceptual knowledge or procedural knowledge – what is often referred to as a skill. Conceptual knowledge is about knowing that… and procedural knowledge is about knowing how to… So, for example, taking learning about metaphors, we could unpack this as follows:

| | Substantive knowledge |
| --- | --- |
| Conceptual… know that… because… | Metaphors are ways of describing something by referring to something else which is the same in a particular way. |
| Procedural… know how to… and be able to… (Skills: involves a verb) | Identify the use of metaphor in a text. Explain in what way a metaphor is the same as the thing it describes. |

To be clear, children are not expected to be able to parrot the conceptual form of words above. They need to be able to understand what a metaphor is. They don’t necessarily need to use this exact formulation of words to explain what it is.

Or

| | Substantive knowledge |
| --- | --- |
| Conceptual… know that… because… | A sentence must contain a subject and a verb. |
| Procedural… know how to… and be able to… (Skills: involves a verb) | Write a simple sentence that contains a subject and a verb and identify both. Identify a fragment and expand it into a sentence. |

Or

| | Substantive knowledge |
| --- | --- |
| Conceptual… know that… because… | When writing lower case h, the ascender needs to be tall enough to distinguish it from an n and that to form the letter you start at the top of the ascender. |
| Procedural… know how to… and be able to… (Skills: involves a verb) | Be able to write the letter h correctly. |

So far, so very technical. What about creativity and meaning, I hear you ask? This is where disciplinary knowledge comes in. Disciplinary knowledge in English pivots around the interactions of authors and audiences and can also be subdivided into conceptual and procedural knowledge. To return to the examples above:

| | Substantive knowledge | Disciplinary knowledge |
| --- | --- | --- |
| Conceptual… know that… because… | Metaphors are ways of describing something by referring to something else which is the same in a particular way. | Know that the purpose of a metaphor is to help the reader understand something more clearly. Know that people may differ in their choice of metaphors to describe a particular thing (‘In English there are many right answers’). |
| Procedural… know how to… and be able to… (Skills: involves a verb) | Identify the use of metaphor in a text. Explain in what way a metaphor is the same as the thing it describes. | Evaluate the effectiveness of a metaphor in various authors’ work. Use metaphor in their own writing to develop the reader’s understanding of character. Evaluate one’s own use of metaphor. |

The teaching sequence is something like this – though some steps could probably be swapped around, particularly steps 1 and 2. A ‘teaching sequence’ could mean anything from a part of a lesson to a topic of work to something returned to again and again over many years. The basic concept of metaphor might be understood by a child in year 2. However, over time, in a coherent, connected curriculum, the examples of metaphor to which a child is exposed will illustrate increasingly complex ideas. Without such enabling knowledge, the chance of children devising interesting or provocative metaphors themselves is remote.

| | Substantive knowledge | Disciplinary knowledge |
| --- | --- | --- |
| Conceptual… know that… because… | We start off sharing examples of metaphors and explaining what a metaphor is. | While also explaining why they can help readers understand more clearly. |
| Procedural… know how to… and be able to… (Skills: involves a verb) | We then help children identify metaphors in texts. We then help children explain in what way a metaphor is the same as the thing it describes. | We then evaluate the effectiveness of specific metaphors in selected texts. Then children use metaphors in their own writing to develop the reader’s understanding of character. Then children evaluate the effectiveness of the metaphors they and their classmates have used, possibly making changes to their own work. |

The sequence goes something like this:

- Explain and exemplify a thing (substantive, conceptual knowledge)

- Explain why it is important to readers (conceptual, disciplinary knowledge)

- Practise identifying the thing and working with the thing on a technical level (procedural, substantive knowledge)

- Use the thing to actually communicate meaning to an audience (procedural, disciplinary knowledge)

- Evaluate if what you’ve written does the job it is intended to do to help the reader (procedural, disciplinary knowledge)

- Revisit all of this several times in different contexts

- Later, consciously do this alongside a whole lot of other things you’ve learnt, when and only when it is appropriate for the reader (rather than the demands of the mark scheme) to do so

Here’s another example:

| | Substantive knowledge | Disciplinary knowledge |
| --- | --- | --- |
| Conceptual… know that… because… | A sentence must contain a subject and a verb. | Know that without both a subject and a verb, the reader will find it hard to make sense of what we are writing. |
| Procedural… know how to… and be able to… (Skills: involves a verb) | Write a simple sentence that contains a subject and a verb and identify both. Identify a fragment and expand it into a sentence. | Write simple sentences for a communicative purpose. Check that these make sense to a reader. Make changes where necessary. |

And here it is as a teaching sequence.

| | Substantive knowledge | Disciplinary knowledge |
| --- | --- | --- |
| Conceptual… know that… because… | We start off sharing examples of simple sentences, explaining they always have a subject and a verb and identifying these. | While also explaining the reader can’t make sense of what we are writing unless both are included. |
| Procedural… know how to… and be able to… (Skills: involves a verb) | We then help children identify subjects and verbs and where they are absent. We then help children expand fragments into complete sentences. | Then children write simple sentences to describe a picture or animation to someone else who may not have seen it. Then children evaluate the sentences they have written to check that they make sense (because they include both a subject and a verb), making changes where necessary. |

The journey is one of developing technical proficiency as an enabler of creative communication.

Here’s another example:

| | Substantive knowledge | Disciplinary knowledge |
| --- | --- | --- |
| Conceptual… know that… because… | When writing lower case h, the ascender needs to be tall enough to distinguish it from an n and that to form the letter you start at the top of the ascender. | It is important to write letters clearly because readers want to read our writing. If it is hard to tell which letter is which, they might find it too hard and give up. If we have a stable seating position, effective pencil grip and form our letters correctly, with practice it will become easy for us to write. |
| Procedural… know how to… and be able to… (Skills: involves a verb) | Be able to write the letter h correctly. Notice when my formation of the letter h is insufficiently clear and correct when practising letter formation. | Notice when my formation of the letter h is insufficiently clear and correct when writing independently. Write legibly when communicating in writing. |

And another:

| | Substantive knowledge | Disciplinary knowledge |
| --- | --- | --- |
| Conceptual… know that… because… | Know that in nonfiction writing a paragraph usually needs to start with a topic sentence and then include further sentences with supporting detail. | Know that the topic sentence helps the reader understand what the paragraph is going to be about and the supporting detail explains the topic sentence more fully, by giving more evidence or explaining the reason why or the stages in a process. Know that a plan can help us identify the supporting detail we need to include to ensure that our paragraph explains our topic sentence to a reader. And/or know that as we are writing, we should check that we are including sufficient supporting detail to explain our topic sentence to a reader. |
| Procedural… know how to… and be able to… (Skills: involves a verb) | Given a series of sentences, identify which should be the topic sentence and which should be the supporting detail. Given two topic sentences, select relevant supporting details from a list. Given a topic sentence, generate supporting detail. Given supporting details, generate a suitable topic sentence.[3] | Be able to write a plan in note form for a paragraph. Be able to write a well-structured nonfiction paragraph and evaluate its effectiveness in communicating meaning to the non-present reader. |

I think for this one, some of the conceptual disciplinary knowledge comes after practising the procedural disciplinary knowledge.

And just in case you are wondering what this might look like for reading:

| | Substantive knowledge | Disciplinary knowledge |
| --- | --- | --- |
| Conceptual… know that… because… | Know that authors deliberately leave out information when they write for two different reasons: because they assume the reader already knows something and putting in too much obvious information would be boring; in order to make the story more interesting by keeping the reader guessing. | Know that when we are reading, we should listen in our head to check that what we are reading makes sense. If it does not make sense to us, we should go back and reread the sentence or paragraph again. |
| Procedural… know how to… and be able to… (Skills: involves a verb) | Identify in a paragraph where the author has left out information to keep the reader guessing. | Check what we are reading makes sense to us when we are reading and take appropriate action when it doesn’t. |

English teaching spans a variety of disciplines or, to use the language of Ruth Ashbee and Christine Counsell, disciplinary quests:

‘Each subject is… a product and an account of an ongoing truth quest, whether through empirical testing in science, argumentation in philosophy/history, logic in mathematics or beauty in the arts.’[4]

Ruth Ashbee expands further on this idea of disciplines as quests and identifies four different categories of quest. English spans all of these:[5]

- The descriptive quest seeks to describe reality using empirically derived facts and logic to either confirm or replace accepted theory. Its quest therefore is for a single, universally agreed though provisional truth. Truth seeking in much of science and maths follows a descriptive quest. Within English, phonics, letter formation, technical definitions and some aspects of grammar are givens. The pronunciation of the grapheme <e> may vary, but the set of variances is a bounded one, with limits.

- The interpretive quest seeks to interpret reality through discussion and argumentation. There is no expectation of the possibility of a single truth around which all agree. Truth seeking in much of history, religious education and human geography follows an interpretive quest, as does the interpretation of literature in English.

- The expressive quest seeks to express truths, often through the medium of the arts. In English, creative writing follows an expressive quest. Here there are many truths and popular acclaim is as valid as scholarly opinion.

- The problem-solving quest seeks to find solutions to problems, such as those created within design and technology, computing and so on. Within English, a set of instructions that successfully enables a reader to accomplish a task is an example of English in problem-solving mode.

This quotation from C.R. Milne expresses how the descriptive and the expressive perform different roles, the one giving us a universal truth, the other an invitation to a more personal truth. Within the discipline of English, both have a valued place.

‘The astronomer may tell us something about the moon, but so too does the poet. The astronomer’s moon is everybody’s moon; the poet’s is very much his own and not everyone can share it.’[6]

Within English, there is substantive knowledge that is often descriptive in this sense and not up for interpretation (‘everybody’s moon’). Something either is or is not a sentence or a correct spelling or a metaphor or a paragraph. This is usually something everybody agrees on. Then there is disciplinary knowledge and this is quite different. This can be expressive, or interpretive or – and here I build on Ruth Ashbee’s work – metacognitive. Here there are wrong answers, certainly, but also many right answers (one’s own moon). The substantive is the enabler of the disciplinary. The disciplinary puts the substantive to work and provides the rationale for learning it.

We can summarise this as follows:

| | Substantive knowledge | Disciplinary knowledge |
| --- | --- | --- |
| | Knowledge as descriptive. (Often there is one, agreed answer) | Knowledge as interpretive, expressive or metacognitive. (There may be many right answers) |
| Conceptual… know that… because… | Knowing various rules, techniques, structures, conventions and vocabulary that authors and speakers use to make meaning. | Knowing that when we study English, we are studying how authors and speakers – including ourselves – try to make meaning to share with an audience. Know that when writing, authors are usually communicating with a non-present reader and this brings particular challenges both when we write (as we have to bear in mind the needs of the non-present reader) and when we read (as we have to work to make sense of what the writer has written). Know that to communicate meaning effectively, writing or speech needs to be clear and orientated towards its intended audience in a way that is either interesting, informative, persuasive or provocative.[7] This is so that the audience thinks it is worthwhile putting in effort to try to understand what the author is saying. Know that therefore we should monitor and evaluate our own writing and speech to check that the meaning is suitably clear, and its intended audience will find it interesting, informative, persuasive or provocative. Know that the meaning an author intends is open to interpretation. |
| Procedural… know how to… and be able to… (Skills: involves a verb) | Identifying these rules, techniques, structures and vocabulary in texts and in speech. Using these component rules, techniques, structures and vocabulary in discrete tasks aimed at developing accuracy and fluency rather than at a specific audience. Procedural knowledge, or skills development, is about enabling subsequent creativity by ensuring learners have the necessary tools to communicate with an audience in a way that is clear and interesting, informative, persuasive or provocative. | Engaging in creative meaning making for an audience through the interpretation and production of texts and talk that integrate rules, structures and vocabulary and that are clear and either interesting, informative, persuasive or provocative. Monitoring and evaluating our own meaning making, whether we are audience or author. Interpreting and evaluating the meaning making of others. |

When I started teaching in the late ‘80s, teaching writing was exclusively focused on the disciplinary – having something to say and publishing your work for an audience, with a little bit of redrafting and refining thrown in (by writing work out ‘in best’). Writing was either to be made into a book or put on display – allegedly for peers to read. We did not teach spelling, derided as mere mechanics, but did teach handwriting – the visual appearance of texts being valued as a way of enticing the reader. We did not explicitly teach text structures though we did read to children a lot of really high-quality literature. Non-fiction did not feature very much. Some children did very well. Some did incredibly badly and, not having been taught phonics, were completely unable to write anything remotely comprehensible. Thus their inner author was not set free, as the approach purported to enable, but was securely shackled by their ignorance and the ideological idealism of their teachers.

Then in 1996, the National Literacy Taskforce was established, ushering in the National Literacy Project and then Strategy. This really focused on phonics for both reading and spelling, text structures, including a whole gamut of non-fiction text types, and knowledge of written language. For example, the excellent publication Grammar for Writing was a game changer, providing well thought out strategies for teaching children to write well at the sentence level. These were exciting times. Levels of attainment shot up. However, this shift came with trade-offs, the school day only having a finite number of hours. Because teachers were spending more time on phonics, sentence and text structure, the emphasis on sharing texts with an audience beyond one’s teacher reduced.

There was, however, a new ‘audience’ in town, and one that had demanding requirements. Writing standard assessment tasks – SATs – were introduced in 1995, ushering in an era of the marker as prime audience. Being held accountable for standards of writing did help to improve attainment – but over time the emphasis on text structure – or genre – began to eclipse all other elements, aided and abetted by the requirements of the National Curriculum of the time. The abolition of the writing SAT in 2012 did not actually change this, since it was replaced by a system of moderated teacher assessment that was just as heavily focused on genre. Even when the National Curriculum removed the requirement to study numerous genres, the focus on teaching multiple genres lingered on.

Over time, learning to write morphed into learning how to achieve the requirements of the teacher assessment framework. This involved producing writing that ticked various boxes. Writing became an exercise in producing something that enabled boxes to be ticked. Teaching writing turned into a process where the teacher shared some writing and showed how it ticked various boxes – maybe with some discrete teaching on how to tick a particular box – and then children were given a shopping list of things that they had to include in order for their audience – the mark scheme – to be satisfied. Redrafting work in order to rectify unticked boxes also became a big thing, accompanied by angst about how independently this had been done.

The dictatorship of the mark scheme as audience meant that a genuine sense of writing for an actual reader disappeared. Choices about vocabulary, syntax or structure were replaced by choices based on harvesting marks. When writing was assessed by SATs, spelling hardly contributed any marks at all, with a concomitant lack of emphasis in terms of curriculum time. Ditto handwriting.

The introduction of the SPAG test (that’s spelling, punctuation and grammar to those who do not know the joys of this assessment) did help shape teaching away from being almost exclusively focused on text level structure and coherence towards thinking a bit about the sentence. It did mean children were actually taught about grammar and about different ways of structuring sentences. This was an improvement. There were, however, two problems. The first was that the scope of the grammatical features 11-year-olds were meant to be able to identify strayed beyond the useful and into the abstruse. I’m old enough to have been taught grammar at school and to have learned Latin. However, some of the knowledge children are tested on gets me second guessing myself. For example, the difference between a prepositional or adverbial phrase or between an embedded or relative clause does not come to me automatically but is something I have to talk myself through. Linked to this is the second problem. The test is great at getting children to be able to feature spot. It is less good at getting children to be able to use features purposefully in their writing. It was, however, eminently teachable. At the school where I was headteacher, despite our challenging demographic, our scores in the SPAG test were sky high. This did not translate to children deliberately choosing to use embedded clauses or prepositional phrases to make their writing clearer or more interesting for the reader. The sense of a reader as a reader – as opposed to marker – had completely disappeared. The inner author was better equipped and hence freer than when explicit teaching was frowned upon. It was, however, a rather limited kind of freedom.

The result of all these different approaches is that a relatively large minority of children are not very motivated to write, either because they have not been given all the tools they need to be successful and the effort involved seems to far outweigh any reward, or because the enterprise lacks meaning – digging ditches in order to dig ditches.

There have been various approaches to motivate children to undertake the hard work necessary in learning to write. The first of these involves the quest for the most irresistible stimulus about which children will be so desperate to write that, adherents of this approach believe, all other concerns fall away. Give children something exciting to write about and the other barriers that make writing hard will magically disappear, or at least become less of a hurdle. A second is the reward of an audience who will appreciate and admire your work. A third is the satisfaction of producing something beautiful. A fourth is amplifying the importance of writing for future exams and employment success. All of these will work to some degree for some children and all of them have some merit. However, collectively they fail to get to the root of the problem. They all try to motivate children to do something hard by dangling a reward at the end of the process. None of them seek to make the process less arduous in the first place.

Producing a piece of text involves doing several different things at once. The cognitive load involved in trying to orchestrate all of these in rapid succession is immense. A different solution is to isolate each component, and teach these separately initially, so that finite cognitive resources are only having to focus on one thing at a time. Children experience success, which is highly motivating. Then, when at least some of these processes have become automated, children can begin to integrate the various processes together.

The drawback of this approach is the inverse of those outlined above. By focusing on one process at a time, it effectively removes the motivational effects of producing an actual piece of writing. For some processes, it also makes the reference point of the reader more tangential. Yes, when you are learning to spell, you are learning to spell in order that a reader can understand your writing. That’s less obvious than when you write an actual piece of text for a specific audience.

However, this drawback can be remediated by providing scaffolding that does the work of some of the processes for the learner, allowing learners to concentrate on just one or two processes at a time while still enjoying the motivational benefits of creating something worthwhile that is shared with others. For example, while Reception children need to devote a great deal of time to developing competence with the transcriptional enablers of technology, this should not mean that they are not also able to spend time composing for an audience, whether orally or via drawing and possibly emergent writing. The app Chatta, for instance, provides an easy way for children to record, replay and share their early attempts at writing sentences.

Striking a balance between devoting time to working on discrete components in isolation and time to use components together in more complex tasks of meaning making is the key curriculum challenge when teaching writing. Different schools may come up with slightly different emphases. We should be wary of decrying those who deviate ever so slightly from our preferred solutions with a little more or a little less of the component or the complex than our own answers to this problem. For example, I would see both the work of Andrew Percival and Stanley Road Primary in Oldham and the work of Ross Young and Felicity Ferguson at The Writing for Pleasure Centre as thoughtful responses to trying to give appropriate weighting to both the technical and the creative (though I tend more to Team Percival, for now at any rate).

[1] Telling the story: the English education subject report – GOV.UK

[2] Telling the story: the English education subject report – GOV.UK

[3] This sequence is based on chapter X of The Writing Revolution

[4] Counsell, C (2018) “Taking Curriculum Seriously” in Impact – Journal of the Chartered College of Teaching, 4:2018 available at: https://my.chartered.college/impact_article/taking-curriculum-seriously/

[5] Ashbee, R (2021) “Curriculum Theory, Culture and the Subject Specialisms” Routledge

[6] This whole section is indebted to Article: Substantive and Disciplinary knowledge – e-Qualitas Teacher Training with particular thanks for the C.R.Milne quotation.

[7] I could have added in further adjectives such as analytic but decided for the sake of clarity to restrict myself to just four. Balancing the non-present and unknown reader’s desire for clarity with their potential desire for nuance and comprehensiveness is always an authorial challenge!
