Last week I shared Victoria Morris’ (@MrsSTeaches) fantastic blog advising how to plan KS1 geography. Over 1770 people have already viewed that one, so I guess it fills a need. Here is part two, which focuses (somewhat unsurprisingly) on KS2 geography. Like its predecessor, it assumes you either have to or want to base your curriculum on the English National Curriculum. Particular thanks to @EnserMark for his help with this one.

We hope to get KS1 history up here next week.

If you’d like Victoria to help you with your curriculum, please see last week’s post for details.

Key Stage 2 Geography

As there is considerably more content in the KS2 curriculum, I have chosen to focus on the elements that affect how units could be chosen and sequenced. My not having mentioned certain objectives, or parts of objectives, does not indicate a lack of importance – it is because they would clearly warrant a unit of their own (such as rivers or energy). This guide should therefore not be seen as a comprehensive description of every element needed for a good geography curriculum, but rather as guidance about how to sequence concepts logically and ensure learning builds progressively across the key stage.

In contrast to KS1, the KS2 geography curriculum offers a greater element of choice as to how it is covered. There are more options where particular regions and features can be selected for in-depth study, and these decisions should be made while taking into account the principles outlined in the KS1 guide (your pupils’ backgrounds and those represented in the local area, the level of diversity your pupils are exposed to, the human and physical features present in the local area, and the history topics and English texts you have selected). There are also multiple ways in which the objectives could be grouped in order to build units of study. However, there are some considerations that can help you to create a structure within which to make these decisions.

Regional studies

There are choices to be made regarding regions selected for in-depth study:

- understand geographical similarities and differences through the study of human and physical geography of a region of the United Kingdom, a region in a European country, and a region within North or South America

As in KS1, there is the option to combine the study of a region of the United Kingdom with using fieldwork to observe, measure, record and present human and physical features of the local area, or to select a contrasting region of the UK. However, note the difference in language – region indicates study of a larger area than just the town or county in which your school is located. There are nine regions of England (London, South East, South West, East of England, East Midlands, West Midlands, North East, Yorkshire and the Humber, North West) as well as the other countries in the UK.

A region in a European country, and a region within North or South America also need to be chosen. In addition to the reasons outlined above for making these choices, it is important to note the aspects of physical and human geography that are specified in the National Curriculum. You may want to select particular regions that contain several of these features, in order to provide opportunities for children to reinforce their learning. For example, Texas includes three different biomes – desert, grasslands and temperate deciduous forest – and Italy contains a mountain range, three volcanoes and the River Po, which is significant to agriculture and industry.

Alternatively, you may find that once you have selected examples of the physical features, there are significant locations in Europe, North or South America that your pupils will not have otherwise met, that you wish to choose as a focus for regional study.

The United Kingdom

You will have already selected a region of the UK to study in depth, either in conjunction with or separately from the study of your school’s local area.

However, there is a considerable amount of locational knowledge relating to the UK, which will need to be taught over more than one unit of work if children are to understand and remember all the concepts listed.

How can you divide the UK locational knowledge into smaller units of work?

- As there are four countries of the UK and four year groups in KS2, one suggestion could be to have each year group focus on a different country, learning about all the key features listed (counties, major cities, geographical regions, human, physical and topographical features and land use patterns) in that country.

- Alternatively, to allow for more revisiting of places in England, you could allocate Wales, Scotland and Northern Ireland to Years 3 – 5, as well as allocating one or two regions of England to each of the four year groups. This would provide children with more opportunities to practise identifying major cities and counties of England on maps so that they can remember what they have learnt in the long term.

- You could map the different UK locations that are met throughout the key stage (e.g. in history topics, as settings in key texts, referred to in any other foundation subject learning, visited on residential trips or through links with other schools) and allocate some time to identifying the features listed in the curriculum for that place.

- When you teach the local area study in history, consider taking the opportunity to link this with aspects of the geography of your local area, covering the requirement in the geography curriculum for children to identify how aspects of UK geography have changed over time. For example, if you are teaching the history of immigration to the East End of London, you could link this with changes in the Docklands over time, the geography of the River Thames and land use patterns in the area.

- Ensure that you are fully exploiting local places of interest that can enhance learning in the foundation subjects, and at the same time use these to develop children’s understanding of the geographical features of the local area and how these have changed over time. For example, if your school is within visiting distance of the home of a scientist who is significant to the KS2 programme of study, allocating some time to the history and geography of the location can provide rich cross-curricular learning opportunities.

- When you plan for units on physical features such as rivers and mountains, ensure that you include examples that are significant in both the UK as a whole and in your local area. For example, children should know that the River Severn (whose source is in Wales and estuary in England) is the longest river in the UK, but could also learn that the River Thames flows through London, which is the capital of England, and that the Firth of Forth is the estuary of several rivers and is near Edinburgh, the capital of Scotland. This is an opportunity to revise knowledge of the countries and capitals of the UK from KS1.

Links to Science

As in KS1, there are links to be made with the science curriculum. Part of the Year 4 study of states of matter includes learning about evaporation and condensation within the water cycle, so it would make sense to combine the science and geography aspects of the water cycle within one unit of work in Year 4. As rivers form a part of the water cycle, a logically sequenced curriculum would include rivers either later in Year 4 than the water cycle, or in a subsequent year group.

The physical processes of mountain formation, volcanoes and earthquakes all require knowledge of the structure of the Earth, which it seems logical to teach after children have learnt about the science of rocks in Year 3. In addition, having an understanding of metals and changes of state is useful in this topic (as the Earth’s outer core is made of molten metal and magma is molten rock), and these are taught in Year 5 materials science, so teaching mountains, volcanoes and earthquakes together late in Year 5 or in Year 6 maximises the opportunities to build on these scientific concepts.

Climate zones and biomes

The remaining elements of physical geography, climate zones and biomes, can be linked with the following locational knowledge objective:

- identify the position and significance of latitude, longitude, Equator, Northern Hemisphere, Southern Hemisphere, the Tropics of Cancer and Capricorn, Arctic and Antarctic Circle, the Prime/Greenwich Meridian and time zones (including day and night)

The Earth is split into three climate zones – polar, temperate and tropical – by the lines of latitude (including the Equator and Tropics of Cancer and Capricorn). These divide the world according to annual temperature and precipitation. Knowledge of lines of latitude also includes understanding that the Equator splits the Earth into the Northern and Southern Hemispheres, and that the Arctic and Antarctic Circles mark the boundaries of the polar zones.

http://www.webquest.hawaii.edu/kahihi/sciencedictionary/C/climatezone.php
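For teachers who like to check their own subject knowledge with a quick experiment, the latitude-based model described above can be sketched in a few lines of Python. This is only a simplified illustration: the function names are my own, the boundary latitudes are rounded approximations, and real climate zones are also shaped by altitude, ocean currents and distance from the sea.

```python
# Simplified latitude-only model of the three climate zones.
# Assumption: boundary values are rounded approximations.
TROPIC_LATITUDE = 23.4        # Tropics of Cancer (north) and Capricorn (south)
POLAR_CIRCLE_LATITUDE = 66.6  # Arctic (north) and Antarctic (south) Circles


def hemisphere(latitude):
    """Return which hemisphere a latitude falls in (positive = north)."""
    if latitude > 0:
        return "Northern Hemisphere"
    if latitude < 0:
        return "Southern Hemisphere"
    return "on the Equator"


def climate_zone(latitude):
    """Classify a latitude in degrees as tropical, temperate or polar."""
    if not -90 <= latitude <= 90:
        raise ValueError("latitude must be between -90 and 90 degrees")
    distance_from_equator = abs(latitude)
    if distance_from_equator <= TROPIC_LATITUDE:
        return "tropical"
    if distance_from_equator < POLAR_CIRCLE_LATITUDE:
        return "temperate"
    return "polar"


print(climate_zone(51.5), hemisphere(51.5))  # London, roughly 51.5 degrees north
```

Trying a handful of places that children have already met (for example, somewhere close to the Equator and somewhere inside the Antarctic Circle) is a quick way to see how the lines of latitude carve up the globe.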

Biomes are distinct biological communities that have formed in response to a shared climate. It is important to note that biome is a broader term than habitat, as a biome may include many different habitats (credit: https://en.wikipedia.org/wiki/Biome). There are several different ways of classifying biomes, so it’s important to choose which one you will use as a school. A suggested list is: taiga, tundra, temperate deciduous forest, scrub forest, grassland, desert, tropical rainforest, temperate rainforest.

https://www.biology-pages.info/B/Biomes.html

Key features of biomes to teach are the annual temperature and precipitation data (including seasonal change), and how these affect the types of vegetation and animal species found there. Due to the link with habitats, ideally this unit should be taught after the Year 4 science on living things and their habitats. Knowledge of how climate relates to the living things that make up a biome feeds logically into the Year 6 science objective:

- identify how animals and plants are adapted to suit their environment in different ways and that adaptation may lead to evolution.

So ensuring children have a secure understanding of climate zones and biomes before this science is taught in Year 6 would ensure that they are fully prepared for this new learning.

The remaining elements of the locational knowledge objective – lines of longitude, the Greenwich/Prime Meridian, and time zones – can be taught either later in the same unit, or as a separate unit later in the key stage. Although for convenience time zones often follow national boundaries, they roughly correspond to the lines of longitude, of which the Prime Meridian is the most significant, as it marks 0° longitude. There is a link to Year 5 science on day and night – whilst it is not essential to teach time zones at the same time as this science knowledge, it would certainly make sense to have taught the basics of why we have day and night before teaching time zones. Therefore, this unit should ideally be sequenced after Year 5 Earth and Space science.
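The rough link between longitude and time zones comes down to simple arithmetic: the Earth turns through 360° in 24 hours, so each hour corresponds to about 15° of longitude. The short Python sketch below illustrates this. The function name is my own invention, and the result is only the offset that longitude alone would suggest; as noted above, real time zones often follow national boundaries instead.

```python
# The Earth turns 360 degrees in 24 hours, so 15 degrees per hour.
DEGREES_PER_HOUR = 360 / 24


def approximate_utc_offset(longitude):
    """Return the whole-hour offset from Greenwich suggested by longitude alone.

    Longitude is in degrees: positive east of the Prime Meridian,
    negative west of it. Real time zones may differ from this estimate.
    """
    if not -180 <= longitude <= 180:
        raise ValueError("longitude must be between -180 and 180 degrees")
    return round(longitude / DEGREES_PER_HOUR)


print(approximate_utc_offset(0))      # Greenwich: 0
print(approximate_utc_offset(-74.0))  # New York, about 74 degrees west: -5
print(approximate_utc_offset(139.7))  # Tokyo, about 139.7 degrees east: 9
```

Comparing these estimates with a real time zone map makes a nice discussion point: children can look for places where the official time differs from what the longitude would predict, and think about why a country might choose that.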

Table showing the earliest suggested year group in which to teach specific units, in order to achieve logical sequence of knowledge:

| Year group | When unit could first be included | |
| --- | --- | --- |
| Year 4 | Climate zones/biomes (after Living things and their habitats science but before Adaptation in Year 6) | The Water Cycle (linked with science); Rivers (after the water cycle) |
| Year 5 | Lines of longitude/time zones (after Earth & Space science) | Mountains, volcanoes & earthquakes (after materials science) |

World Locational Knowledge

In order for your children to have a thorough knowledge of the world’s countries (including their environmental regions, key physical and human characteristics and major cities), you will need to plan for plenty of opportunities for retrieval practice and introducing new countries. A useful way of providing additional chances to learn about the world’s countries could be to plan to include lessons that orientate children to countries that feature in other subjects. For example, starting a unit on the Ancient Romans with a lesson on the location and geographical features of Italy, and then teaching a similar lesson on Greece before learning about the Ancient Greeks, would give children a good understanding of the geography of the Mediterranean.

Aidan Severs (who blogs and tweets as @thatboycanteach) has produced a comprehensive list of questions to ask about places you study, which can be used to help teachers identify the content to include in these ‘orientation’ lessons. http://www.thatboycanteach.co.uk/2019/06/geography-key-questions-place-national-curriculum.html

Unit Checklist

Does the unit include opportunities for:

- Locating places on maps, globes and in atlases?

- Using mapwork skills (including compass points and grid references)?

- Fieldwork where possible? The Royal Geographical Society have produced some useful resources to support schools with planning for fieldwork. https://www.rgs.org/in-the-field/fieldwork-in-schools/

- Regular retrieval practice? For example, labelling countries, cities and geographical features learnt on maps, identifying features learnt on maps or aerial photographs of places or labelling key vocabulary on diagrams.

- Deepening children’s understanding of key physical (climate zones, biomes and vegetation belts, rivers, mountains, volcanoes and earthquakes) and human (types of settlement and land use, economic activity including trade links, and the distribution of natural resources including energy, food, minerals and water) features?

- Deepening children’s understanding of important geographical concepts? Clare Sealy has created a list of the main concepts that should be taught in primary geography: atmosphere, climate, continent, landform, terrain, environment, resources, biome, fertile/fertility, vegetation, settlement, population, region, trade, development, sustainability, diversity. (Developed from the list originally mentioned in this blog https://primarytimery.com/2017/10/28/the-3d-curriculum-that-promotes-remembering/)


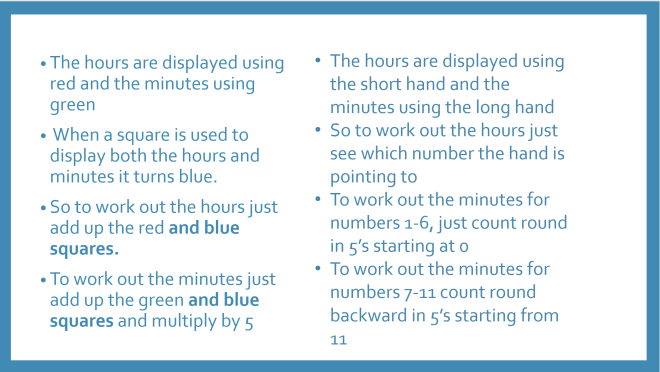

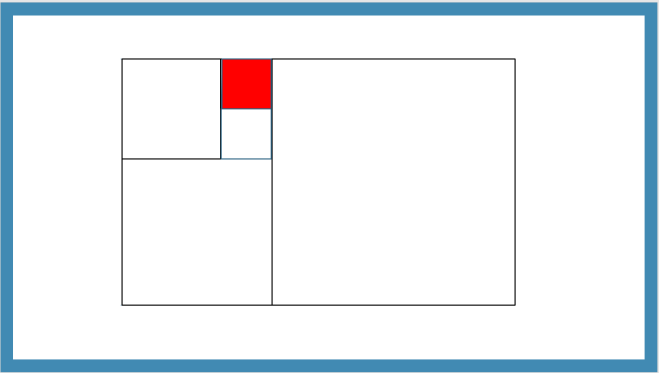

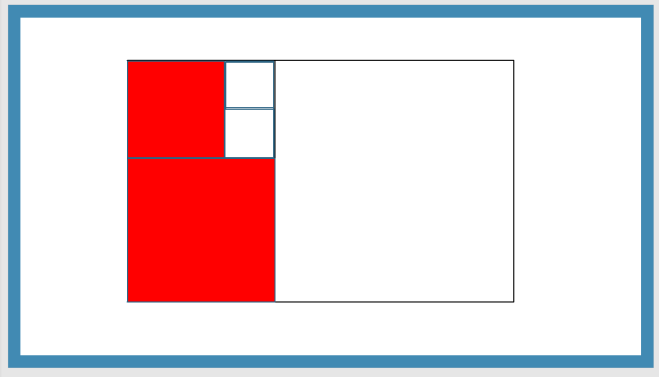

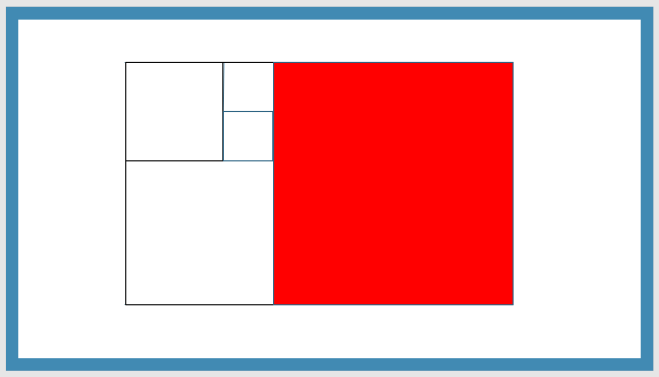

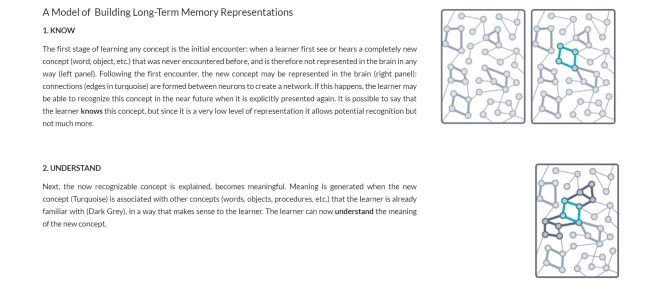
